Can a Contract Freeze the Law on Autonomous Weapons?

- By Jeremy Howard and Luke Versweyveld, co-founders of Virgil Law. Jeremy is the Founding CEO of Answer.AI and inventor of the first LLM. Luke is the CEO of Virgil, and an expert on contract law.

Background

OpenAI recently published “Our agreement with the Department of War”, in which they included this important contractual language (emphasis ours):

The Department of War may use the AI System for all lawful purposes, consistent with applicable law, operational requirements, and well-established safety and oversight protocols. The AI System will not be used to independently direct autonomous weapons in any case where law, regulation, or Department policy requires human control, nor will it be used to assume other high-stakes decisions that require approval by a human decisionmaker under the same authorities. Per DoD Directive 3000.09 (dtd 25 January 2023), any use of AI in autonomous and semi-autonomous systems must undergo rigorous verification, validation, and testing to ensure they perform as intended in realistic environments before deployment.

In addition, they included this “FAQ”:

What if the government just changes the law or existing DoW policies?

Our contract explicitly references the surveillance and autonomous weapons laws and policies as they exist today, so that even if those laws or policies change in the future, use of our systems must still remain aligned with the current standards reflected in the agreement.

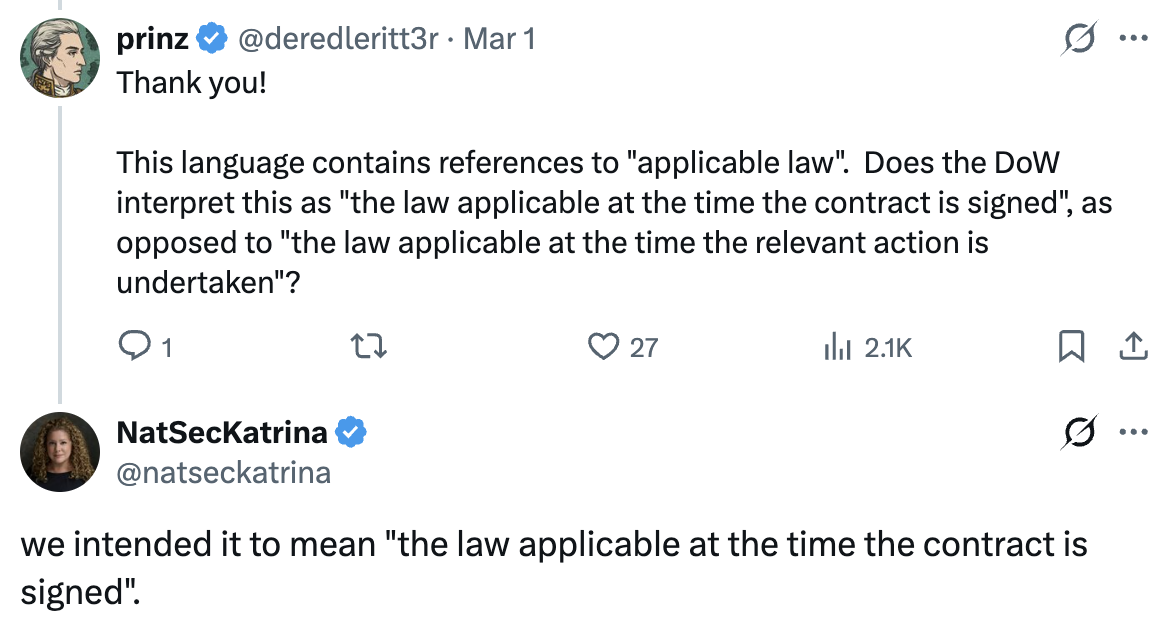

In an “AMA” (Ask Me Anything) on x.com, OpenAI CEO Sam Altman was asked about this by user @deredleritt3r:

…could you please clarify which provision in the agreement with the DoW “expressly references the laws and policies AS THEY EXIST TODAY”

Katrina Mulligan, Head of National Security Partnerships, OpenAI for Government, responded with the above text of the contract, and @deredleritt3r followed up:

This language contains references to “applicable law”. Does the DoW interpret this as “the law applicable at the time the contract is signed”, as opposed to “the law applicable at the time the relevant action is undertaken”?

To which Katrina Mulligan responded:

we intended it to mean “the law applicable at the time the contract is signed”.

In this article, we will explain why, based on the contract language shared by OpenAI, this understanding is incorrect. Under US law, the contract language will be interpreted as referring to the law applicable at whatever future time a contract issue arises. This is a critical point, because without this protection, it is not the case that “if those laws or policies change in the future, use of our systems must still remain aligned with the current standards reflected in the agreement”.

As we shall see, multiple independent legal doctrines, spanning 150 years of Supreme Court precedent and the foundational treatise on contract law, confirm that “lawful purposes” is inherently ambulatory: it refers to the law as it exists at the time of performance, not at signing. It appears that OpenAI may have entered into a contract that does not have the protections they believed it did.

Analysis of language

We will step through the paragraph clause by clause and provide annotations:

The Department of War may use the AI System for all lawful purposes,

As we’ll see, this is the key section. It is clear that “all” lawful purposes are permitted under the contract.

consistent with applicable law, operational requirements, and well-established safety and oversight protocols.

“consistent with applicable law” is just restating the previous “lawful purposes” language. “Operational requirements” simply refers to whatever operations the department requires. “well-established safety and oversight protocols” is the fuzziest part of this sentence, since there are no such established safety and oversight protocols at present. It would be difficult to argue that the US military lacks the ability to set such safety and oversight protocols itself. So in practice, “may use the AI System for all lawful purposes” is the plain practical meaning of this sentence.

The AI System will not be used to independently direct autonomous weapons in any case where law, regulation, or Department policy requires human control,

This section must be read as a whole, since it contains a constraint (“will not be used to independently direct autonomous weapons”) followed by a carve-out (“in any case where law, regulation, or Department policy requires human control”). Because of the carve-out, the first half of the sentence does not add a significant constraint: the carve-out simply restates the “may use the AI System for all lawful purposes” permission.

nor will it be used to assume other high-stakes decisions that require approval by a human decisionmaker under the same authorities.

This has the same constraint-then-carveout structure as the first part of the sentence, and the result is the same. “under the same authorities” refers to the “lawful purposes” outlined earlier.

The result of this sentence is not to add a significant constraint to the “may use the AI System for all lawful purposes” language.

Per DoD Directive 3000.09 (dtd 25 January 2023), any use of AI in autonomous and semi-autonomous systems must undergo rigorous verification, validation, and testing to ensure they perform as intended in realistic environments before deployment.

This is simply a statement of fact. It describes the current DoD directive. It does not use any language to incorporate this directive into the contract itself, and it does not create any additional contractual obligations on either party. If the DoD directives change, then the permitted “lawful purposes” change too. This is not merely a logical inference but a well-established legal doctrine, as we will see below.

In addition, the current directive is already a carve-out to the constraint “AI System will not be used to independently direct autonomous weapons”; it already allows for them to be used if they “perform as intended”.

The meaning of “lawful purposes”

In the light of this analysis, let’s now look at OpenAI’s statement “Our contract explicitly references the surveillance and autonomous weapons laws and policies as they exist today, so that even if those laws or policies change in the future, use of our systems must still remain aligned with the current standards reflected in the agreement.”

The first part is true. As we’ve seen, the contract “explicitly references the surveillance and autonomous weapons laws and policies as they exist today” by citing DoD Directive 3000.09.

However, the second part is not true, based on the language OpenAI chose to share: “so that even if those laws or policies change in the future, use of our systems must still remain aligned with the current standards”. Specifically, the explicit reference occurs purely as a statement of fact, and does not incorporate the directive’s language or introduce any contractual commitments. Ms Mulligan’s intention for the contract to refer to “the law applicable at the time the contract is signed” has not been successfully captured by the contract language shared.

We will now review the term “lawful purposes”, to understand why, and how, it refers to the law as it exists at the time of performance, not at signing.

Supervening illegality

The Restatement (Second) of Contracts, the seminal treatise on American contract law, directly addresses this question. The commentary to Section 264 (“Prevention by Governmental Regulation or Order”) states: “it is a basic assumption of a contract that the law will not directly intervene to make performance impracticable when it is due.” It explicitly frames lawfulness as assessed at the time of performance, not signing.

The Supreme Court affirmed this principle in Louisville & N. R. Co. v. Mottley, 219 U.S. 467 (1911). The case arose from an 1871 settlement in which the L&N Railroad gave the Mottleys free lifetime passes as compensation for injuries. In 1906, Congress passed the Hepburn Act (an amendment to the Interstate Commerce Act) prohibiting railroads from issuing free passes. The railroad stopped honoring the passes. The Mottleys sued for specific performance, arguing the 1906 Act didn’t apply to pre-existing contracts. The Supreme Court ruled against them: the subsequent federal legislation rendered the contract unenforceable.

In that case, Justice Harlan wrote for the Court that a contract cannot be enforced against a party “even though valid when made” if subsequent legislation has made it illegal. The Court reasoned that if the principle were otherwise, “individuals and corporations could, by contracts between themselves, in anticipation of legislation, render of no avail the exercise by Congress, to the full extent authorized by the Constitution, of its power to regulate commerce. No power of Congress can be thus restricted.”

This closely parallels the current discussion: OpenAI gave the DoW an AI system “for all lawful purposes.” If Congress later legislates on autonomous weapons, OpenAI cannot argue the contract locks in pre-legislation standards, just as the Mottleys could not argue their 1871 contract was immune from the 1906 Hepburn Act.

This doctrine is not contested. As Justice Harlan noted, the authorities “are numerous and are all one way.” It follows directly that “all lawful purposes” cannot be read as a static reference to the law at the time of signing. The concept of supervening illegality requires that lawfulness be assessed at the time of performance.

The government cannot contract away its legislative power

The supervening illegality doctrine applies to all contracts. But there is an additional, even more fundamental problem with OpenAI’s interpretation: one of the contracting parties is the United States government itself.

If OpenAI’s reading were correct, that the contract locks in the law as it existed at signing, it would effectively constrain Congress’s future legislative authority over AI and autonomous weapons. The Department of War, as the government’s primary AI customer, would be unlikely to support legislation contradicting its own contract, creating a de facto freeze on legislative action. This is precisely the kind of outcome the Supreme Court has rejected for over a century.

Most directly on point is United States v. Winstar Corp., 518 U.S. 839 (1996). During the savings and loan crisis, federal regulators encouraged healthy thrifts to acquire failing ones, contractually promising favorable accounting treatment. Congress then passed FIRREA (1989), eliminating that treatment and rendering the merged thrifts insolvent. The thrifts’ contracts contained a clause requiring compliance “in all material respects with all applicable statutes, regulations, orders of, and restrictions imposed by the United States”, language strikingly similar to OpenAI’s “consistent with applicable law.” The Supreme Court held 7-2 that this clause simply required the thrifts to obey future laws as they arose; it did not freeze the regulatory framework at the time of signing. The Court further held that the government retains its legislative sovereignty even when it contracts. Subsequent legislation applies regardless, and the only question is whether the government owes damages for the change, not whether the old law survives. The parallel to the OpenAI-DoW contract is direct: “consistent with applicable law” refers to whatever the law is when the contract is performed, not when it was signed.

In Stone v. Mississippi, 101 U.S. 814 (1879), the Court unanimously held that a state cannot contract away its police power (i.e., its authority to regulate for the public welfare). Mississippi had granted a lottery charter in 1867, then prohibited lotteries by constitutional amendment in 1868. The lottery company argued the charter was a protected contract. The Court disagreed: the power to regulate for public welfare is inalienable and cannot be surrendered through contract.

The same principle was established two years earlier in Boston Beer Co. v. Massachusetts, 97 U.S. 25 (1877), where a corporate charter granting the right to manufacture malt liquors was held superseded by subsequent state regulation. And in Home Building & Loan Ass’n v. Blaisdell, 290 U.S. 398 (1934), the Court held that the Contracts Clause of the Constitution is not absolute, and must be balanced against the state’s police power when serving the public welfare.

Most recently, in Sveen v. Melin, 584 U.S. 129 (2018), the Court held 8-1 that a state could retroactively apply a new statute to pre-existing contracts without violating the Contracts Clause, reaffirming that contracts exist within a living legal framework, not a frozen one.

These cases span 140 years and remain good law. The government, whether state or federal, simply cannot bind itself by contract to refrain from future legislation.

The absence of a freezing clause

If OpenAI intended to lock in the law at the time of signing, they could have done so with explicit contractual language, rather than relying on the definition of “lawful.” Contracts of this nature are typically drafted with specificity precisely to avoid ambiguity over scope.

There is a mechanism for doing so: a “freezing clause” (also called a “stabilization clause”). These are specialized contractual provisions, found primarily in international investment agreements, that explicitly state that only the laws in effect at the date of signing shall govern the agreement for its term. The existence of freezing clauses as a distinct, specialized drafting mechanism is itself powerful evidence that the default position is ambulatory. If “applicable law” and “lawful purposes” already meant “the law at the time of signing,” freezing clauses would be unnecessary. They exist precisely because, without them, contractual references to law are understood to refer to the law as it exists at the time of performance.

The contract language OpenAI chose to share contains no such clause. It is possible that the full contract includes one that they chose not to share, but that would be surprising, since presumably they shared the language that best supports the arguments in their article.

Such clauses are rare enough in government procurement that experts we spoke to were unaware of ever seeing one. Indeed, in Winstar the justices made it very clear that such clauses should not be assumed to be valid. Justice Scalia’s concurrence (joined by Kennedy and Thomas) stated: “Governments do not ordinarily agree to curtail their sovereign or legislative powers, and contracts must be interpreted in a common sense way against that background understanding.” Justice Souter’s plurality opinion stated that a contract “to adjust the risk of subsequent legislative change does not strip the Government of its legislative sovereignty.”

Even if OpenAI’s contract contained an explicit freezing clause, it is far from clear that such a clause would be enforceable against the US government. The Federal Circuit has held that the sovereign acts doctrine, the principle that the government cannot be held liable for the impact of its public and general acts on its own contracts, is “inherent in every government contract” (Conner Bros. Construction Co. v. Geren, 550 F.3d 1368 (Fed. Cir. 2008), applying Winstar). A clause purporting to freeze the law would directly contradict this inherent term.

Therefore, it seems reasonable to conclude that the phrase “all lawful purposes” refers to whatever the law permits at the time the contract is performed.

Quotes from other experts

A number of national security legal experts have come to the same conclusion: the contract language OpenAI has shared does not appear to constrain the government or provide meaningful contractual red lines. For example:

- Charlie Bullock, Senior Research Fellow at LawAI: “What the contract language we do have says is, essentially: DOW gets to use OpenAI’s AI system for all lawful purposes. The end. The only real contractual restriction on DOW’s ability to use OpenAI’s systems other than ‘DOW has to follow the law’ is ‘DOW has to follow Department policy.’ But DOW can, of course, change its own policies whenever it wants.”

- Alan Rozenshtein, Associate Professor at University of Minnesota Law School, Research Director and Senior Editor at Lawfare, former DOJ attorney: “I’m still trying to figure out what terms OAI agreed to, but I increasingly think they were not substantive restrictions on what DoD could do. So not sure it was much of a compromise.”

- Brad Carson, former General Counsel of the Army, former Undersecretary of the Army, and former Undersecretary of Defense: “[this] interpretation is the right one, IMO”, referring to this statement from OpenAI employee Leo Gao: “the contract snippet from the openai dow blog post is so obviously just ‘all lawful use’ followed by a bunch of stuff that is not really operative except as window dressing.”

Many thanks to Brad Carson for his thoughtful feedback during the drafting of this article.